HAPI FHIR Terminology Server: How To Set Up And Configure

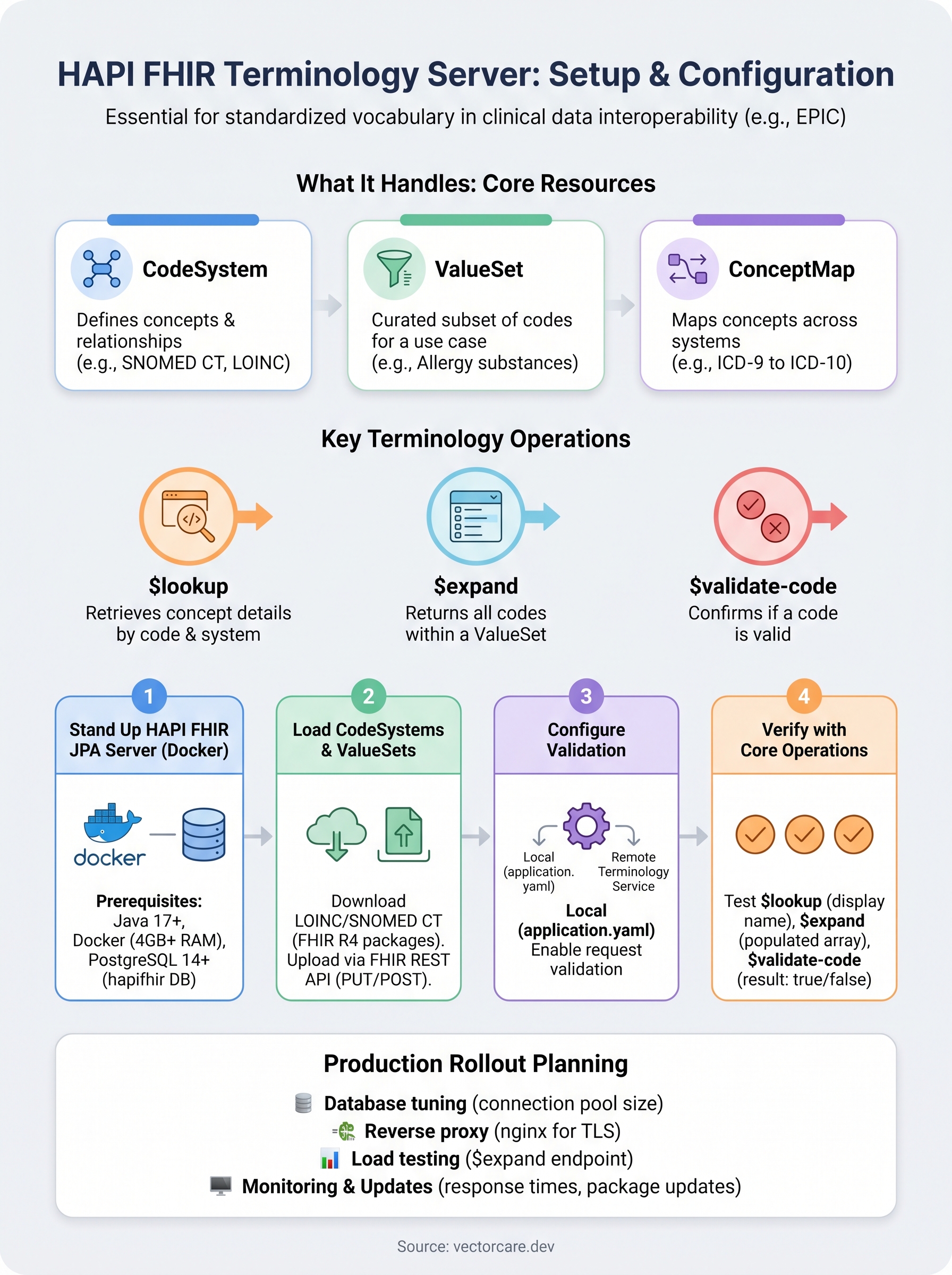

A HAPI FHIR terminology server gives you a dedicated engine for managing code systems, value sets, and concept maps, the building blocks that make clinical data interoperable across EHR systems like EPIC. Without reliable terminology services, your FHIR resources lack the standardized vocabulary needed for consistent data exchange, which quickly becomes a dealbreaker when health systems evaluate your integration.

Setting one up isn't trivial, though. You're looking at Java dependencies, database configuration, terminology package loading, and ongoing maintenance, all before you even get to the EPIC-specific requirements like SMART on FHIR compliance and OAuth flows. For healthcare vendors without deep FHIR engineering teams, this stack of technical demands can stall product launches by months. That's exactly the kind of complexity we built VectorCare Dev SoFaaS to eliminate: our no-code platform handles FHIR configuration, compliance, and EPIC integration so vendors can skip the infrastructure grind.

This guide walks you through the full process of setting up and configuring a HAPI FHIR terminology server, from initial installation to loading terminologies and validating your setup. Whether you're building your own stack or evaluating what it takes before choosing a managed path, you'll leave with a clear, actionable understanding of what's involved.

What a HAPI FHIR terminology server handles

A HAPI FHIR terminology server sits at the center of clinical data interoperability. It stores and serves the coded vocabularies that give your FHIR resources meaning: things like SNOMED CT, LOINC, RxNorm, and ICD-10. When a system sends a diagnostic code, the terminology server validates it, expands related value sets, and confirms the concept exists within the correct code system. Without this layer, your data exchange breaks down because health systems and EHRs like EPIC cannot trust the semantic accuracy of incoming data.

CodeSystems, ValueSets, and ConceptMaps

The three core resource types a terminology server manages are CodeSystems, ValueSets, and ConceptMaps. A CodeSystem defines a set of concepts and their relationships, for example, the full SNOMED CT hierarchy. A ValueSet pulls a defined subset of codes from one or more CodeSystems for a specific clinical use case, such as "all valid allergy substance codes." A ConceptMap translates concepts between different code systems, which becomes critical when you bridge data from a legacy EMR into EPIC's FHIR environment.

| Resource Type | Purpose | Example |
|---|---|---|
| CodeSystem | Defines concepts and their relationships | SNOMED CT, LOINC, RxNorm |
| ValueSet | Curated subset of codes for a use case | Allergy substances, vital signs |
| ConceptMap | Maps concepts across different systems | ICD-9 to ICD-10 translation |

Terminology Operations

Your HAPI FHIR server exposes standardized FHIR operations that external systems call at runtime to validate and expand terminologies. The three you'll rely on most are:

- $lookup: Retrieves details about a specific concept by code and system, including display names and parent concepts.

- $expand: Returns all codes within a given ValueSet, commonly used to populate clinical UI dropdowns inside EPIC.

- $validate-code: Confirms whether a specific code is valid within a CodeSystem or ValueSet before a resource gets stored.

These operations run against the terminology data you've loaded locally, so the quality and completeness of your imported CodeSystems directly determines the accuracy of every validation call your application makes.

Running these operations in production requires low-latency responses and a well-indexed database backend. Latency at the terminology layer cascades directly into a poor clinician experience inside EPIC, which can sink a health system pilot before it completes review.

Step 1. Stand up HAPI FHIR JPA server

The fastest path to a running HAPI FHIR JPA server is Docker. The JPA server variant is the right choice here because it persists resources to a relational database, which is what a production-grade terminology server requires. The in-memory option won't survive restarts, so skip it entirely for this use case.

Prerequisites

Before you pull any images, confirm your environment has the following in place. Missing any of these will block the setup mid-process.

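- Java 17 or later installed and on your PATH (needed only if you build or run the server outside Docker, since the official image bundles its own Java runtime)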

- Docker Desktop (or Docker Engine on Linux) running with at least 4 GB of memory allocated

- PostgreSQL 14+ available, either locally or as a managed cloud instance

- A database named hapifhir with a dedicated user and password already created
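If the database and user do not exist yet, the last prerequisite can be scripted. The sketch below only prints the SQL; the function name `hapi_db_sql`, the role name, and the password are illustrative placeholders, and it assumes you can connect to PostgreSQL as a superuser.

```shell
# Print the SQL that creates a login role and the hapifhir database it owns.
# Arguments: role name, password (placeholders chosen by the caller).
# Note: a password containing a single quote would need escaping first.
hapi_db_sql() {
  cat <<SQL
CREATE ROLE $1 WITH LOGIN PASSWORD '$2';
CREATE DATABASE hapifhir OWNER $1;
SQL
}

# Usage (host and superuser name are placeholders):
#   hapi_db_sql hapi_user 'change-me' | psql -h your-db-host -U postgres
```

Printing the statements first lets you review them before piping them into psql.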

Run With Docker

Pull and start the HAPI FHIR JPA server using the official image. The command below connects it to your PostgreSQL instance and exposes the FHIR endpoint on port 8080.

    docker run -d \
      --name hapi-fhir \
      -p 8080:8080 \
      -e spring.datasource.url=jdbc:postgresql://your-db-host:5432/hapifhir \
      -e spring.datasource.username=your-db-user \
      -e spring.datasource.password=your-db-password \
      -e spring.datasource.driverClassName=org.postgresql.Driver \
      -e spring.jpa.properties.hibernate.dialect=ca.uhn.fhir.jpa.model.dialect.HapiFhirPostgres94Dialect \
      hapiproject/hapi:latest

Once the container starts, confirm your HAPI FHIR terminology server is running by opening http://localhost:8080/fhir/metadata in a browser. A valid CapabilityStatement response means the server is live.

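If the server fails to start or the CapabilityStatement request returns an error, check your database connection string first; that's the most common startup failure. Because the command pulls the latest tag, also confirm the dialect class name against the starter README for that release, since newer releases document ca.uhn.fhir.jpa.model.dialect.HapiFhirPostgresDialect instead.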
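The server can take a while to initialize its schema on first start, so a scripted readiness check is often more convenient than refreshing a browser. This is a minimal sketch that polls the metadata endpoint; the function name `wait_for_fhir` and its default attempt count and delay are assumptions, not HAPI settings.

```shell
# Poll the FHIR metadata endpoint until it returns a CapabilityStatement.
# Arguments: base URL, max attempts (default 30), delay in seconds (default 5).
wait_for_fhir() {
  base_url="$1"
  attempts="${2:-30}"
  delay="${3:-5}"
  i=1
  while [ "$i" -le "$attempts" ]; do
    # -f makes curl fail on HTTP errors; the pattern tolerates compact or pretty JSON.
    if curl -fsS --max-time 5 -H 'Accept: application/fhir+json' \
         "$base_url/metadata" 2>/dev/null \
         | grep -q '"resourceType": *"CapabilityStatement"'; then
      echo "FHIR server is up at $base_url"
      return 0
    fi
    if [ "$i" -lt "$attempts" ]; then sleep "$delay"; fi
    i=$((i + 1))
  done
  echo "FHIR server did not respond at $base_url" >&2
  return 1
}

# Usage:
#   wait_for_fhir http://localhost:8080/fhir
```

The non-zero return code on failure makes the function usable as a gate in a setup script before any terminology upload begins.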

Step 2. Load CodeSystems and ValueSets

With your server running, the next priority is loading the terminology content that your HAPI FHIR terminology server will serve. An empty server validates nothing, so this step determines how useful your setup actually is. The two most common clinical terminologies you'll need are LOINC and SNOMED CT, both available as downloadable packages after registration on their respective official sites. LOINC registration is free, while SNOMED CT access depends on the licensing arrangement for your country.

Download and Prepare Your Terminology Files

LOINC and SNOMED CT each publish their content as a release archive. Download the current release of each. LOINC provides a direct download after login. SNOMED CT distributes an RF2 archive, which is not a FHIR format but which HAPI can import through its terminology uploader. For anything you load as FHIR resources, confirm the files are FHIR R4 before attempting any upload, since version mismatches cause failures that are hard to trace.

Mixing R4 and DSTU2/STU3 resources in the same upload batch is the most common cause of import errors at this stage.
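For the release archives themselves, HAPI's command-line tool (hapi-fhir-cli, a separate download from the server) provides an upload-terminology command. The wrapper below is a sketch: the function name `upload_terminology`, its default target URL, and the archive filename in the usage line are assumptions, and you should confirm the flags against the CLI version you install.

```shell
# Upload a terminology release archive with hapi-fhir-cli.
# Arguments: archive path, code system URL, FHIR base URL (optional).
upload_terminology() {
  archive="$1"
  system_url="$2"
  target="${3:-http://localhost:8080/fhir}"
  if [ ! -f "$archive" ]; then
    echo "archive not found: $archive" >&2
    return 2
  fi
  if ! command -v hapi-fhir-cli >/dev/null 2>&1; then
    echo "hapi-fhir-cli is not on PATH" >&2
    return 3
  fi
  # -d archive, -v FHIR version, -t target server, -u code system URL
  hapi-fhir-cli upload-terminology -d "$archive" -v r4 -t "$target" -u "$system_url"
}

# Usage (filename is a placeholder for the release you downloaded):
#   upload_terminology ./Loinc.zip http://loinc.org
#   upload_terminology ./SnomedCT_RF2.zip http://snomed.info/sct
```

Large releases can take a long time to index after the upload call returns, so allow for that before running any verification queries.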

Upload Using the FHIR REST API

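Once your files are ready, use a standard HTTP PUT or POST request to load each CodeSystem or ValueSet into the server. A POST to the server base, as in the example below, only accepts a Bundle of type transaction or batch; to load a single resource, PUT it to its own endpoint, such as /fhir/CodeSystem/[id]. The example below uploads a CodeSystem bundle using curl.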

    curl -X POST \
      http://localhost:8080/fhir \
      -H "Content-Type: application/fhir+json" \
      -d @loinc-bundle.json

After each upload, confirm the resource loaded correctly by running a GET request against the resource endpoint:

    curl 'http://localhost:8080/fhir/CodeSystem?url=http://loinc.org'

A populated bundle response confirms the CodeSystem is indexed and ready to serve validation and expansion requests.
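To script that check instead of reading the bundle by eye, you can request a count-only search and pull out the total. This is a rough sketch using sed rather than a JSON parser; the helper name `bundle_total` is illustrative, and it assumes the response is a searchset Bundle with a single total element.

```shell
# Read a searchset Bundle on stdin and print its "total" value.
# Newlines are removed first so pretty-printed JSON is handled too.
bundle_total() {
  tr -d '\n' | sed -n 's/.*"total"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p'
}

# Usage: prints 1 if exactly one LOINC CodeSystem resource is stored.
#   curl -s 'http://localhost:8080/fhir/CodeSystem?url=http://loinc.org&_summary=count' \
#     | bundle_total
```

A total of 0 means the upload did not create the CodeSystem, which is worth catching before you move on to validation settings.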

Step 3. Configure validation and remote terminology

With your terminology loaded, you need to tell your HAPI FHIR terminology server how to handle validation requests. By default, HAPI validates resources against whatever CodeSystems and ValueSets you've loaded locally, but you can also point it to a remote terminology service for codes you haven't imported yourself.

Configure Local Validation in application.yaml

Your primary validation settings live in the application.yaml file inside the HAPI JPA starter project. Open that file and set the following properties to enable resource validation against your local terminology content:

    hapi:
      fhir:
        validation:
          requests_enabled: true
          responses_enabled: false
        implementationguides:
          enabled: true

Setting requests_enabled: true tells HAPI to validate incoming resources before storing them, which catches bad codes at write time rather than downstream in your clinical workflow. Keep responses_enabled: false unless you also need outbound resources validated, since enabling both adds measurable latency to every write operation.

Turn on response validation only after you've confirmed your upstream systems consistently send well-formed resources; otherwise you'll see a spike in rejected responses during initial testing.

Point to a Remote Terminology Service

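When your local CodeSystems don't cover a code you need to validate, configure HAPI to delegate that lookup to a remote terminology server. Add the following block to your application.yaml to register a remote endpoint. The exact property name depends on your starter release, so confirm it against the application.yaml bundled with your version: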

    hapi:
      fhir:
        remote_terminology_validation_url: https://tx.fhir.org/r4

This setting routes any unresolved validation request to the configured endpoint before returning a failure to the calling system. That keeps your validation pipeline functional while you incrementally build out your local terminology content, rather than blocking integration work until every CodeSystem is fully imported.

Step 4. Verify with $lookup, $expand, and $validate-code

With your HAPI FHIR terminology server configured and terminology loaded, run the three core operations to confirm everything works as expected. These tests tell you whether your server resolves concepts correctly before you connect any upstream application or EPIC workflow to it.

Test $lookup First

The $lookup operation confirms your server can retrieve concept details by code and system. Run the request below against a LOINC code you know you loaded in Step 2. The URL is single-quoted so the shell does not try to expand $lookup as a variable:

    curl 'http://localhost:8080/fhir/CodeSystem/$lookup?system=http://loinc.org&code=8867-4'

A successful response returns the display name, system URL, and any designated properties for that code. If you get an "unknown code" error, your CodeSystem upload from Step 2 did not complete successfully, so re-check that import before moving forward.

A failed $lookup is almost always a symptom of a format mismatch or an incomplete upload, not a server configuration problem.

Test $expand on a ValueSet

$expand returns every code within a defined ValueSet, which is what EPIC calls when populating clinical UI dropdowns. Send this request to expand a ValueSet you loaded:

    curl 'http://localhost:8080/fhir/ValueSet/$expand?url=http://hl7.org/fhir/ValueSet/observation-status'

Confirm the response contains a populated expansion.contains array with individual code entries.

Test $validate-code Against a Known Value

Run $validate-code to confirm your server correctly accepts and rejects codes:

    curl 'http://localhost:8080/fhir/CodeSystem/$validate-code?url=http://loinc.org&code=8867-4'

Your response should include result: true for valid codes and result: false for invalid ones, confirming your validation layer is working correctly before any production traffic hits the server.
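Checking the rejection path is as important as checking the acceptance path. The sketch below extracts the result flag from a Parameters response so you can compare a known-good code with a deliberately invalid one; the helper name `validate_result` and the made-up code are assumptions, and the pattern assumes the server writes name before valueBoolean within the result parameter.

```shell
# Read a Parameters resource on stdin and print the boolean value of the
# parameter named "result" (true or false).
validate_result() {
  tr -d '\n' | sed -n 's/.*"name"[[:space:]]*:[[:space:]]*"result"[[:space:]]*,[[:space:]]*"valueBoolean"[[:space:]]*:[[:space:]]*\([a-z]*\).*/\1/p'
}

# Usage: the first call should print true, the second false.
#   curl -s 'http://localhost:8080/fhir/CodeSystem/$validate-code?url=http://loinc.org&code=8867-4' \
#     | validate_result
#   curl -s 'http://localhost:8080/fhir/CodeSystem/$validate-code?url=http://loinc.org&code=not-a-real-code' \
#     | validate_result
```

If the invalid code also comes back true, or the output is empty, inspect the raw response before trusting the validation layer.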

Wrap up and plan your production rollout

You now have a HAPI FHIR terminology server running, loaded with clinical terminologies, and verified through all three core operations. Before you push this to production, lock down two things: set your database connection pool size to handle concurrent validation requests without queuing, and put a reverse proxy like nginx in front of port 8080 to handle TLS termination. Run a load test against your $expand endpoint specifically, since large ValueSet expansions are the most common performance bottleneck under real clinical traffic.
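Before reaching for a full load-testing tool, a quick latency spot check on $expand can flag obvious problems. This is a rough sketch, not a substitute for a real load test; the helper name `summarize_timings` and the request count of 20 are arbitrary choices for illustration.

```shell
# Summarize request timings read from stdin (seconds, one value per line):
# prints the sample count, the mean, and the slowest request.
summarize_timings() {
  LC_ALL=C awk '
    { sum += $1; if ($1 > max) max = $1 }
    END { if (NR) printf "n=%d mean=%.3f max=%.3f\n", NR, sum / NR, max }
  '
}

# Usage: time 20 sequential $expand calls against the ValueSet from Step 4.
#   for i in $(seq 1 20); do
#     curl -s -o /dev/null -w '%{time_total}\n' \
#       'http://localhost:8080/fhir/ValueSet/$expand?url=http://hl7.org/fhir/ValueSet/observation-status'
#   done | summarize_timings
```

Sequential requests only measure single-user latency, so treat the numbers as a baseline to compare against after you change pool sizes or indexes.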

From here, your roadmap includes setting up monitoring on response times, scheduling regular terminology package updates as LOINC and SNOMED CT release new versions, and documenting your CodeSystem inventory for any health system that audits your integration. If managing this infrastructure pulls your team away from your core product, deploy your EPIC integration without the infrastructure overhead and ship faster.